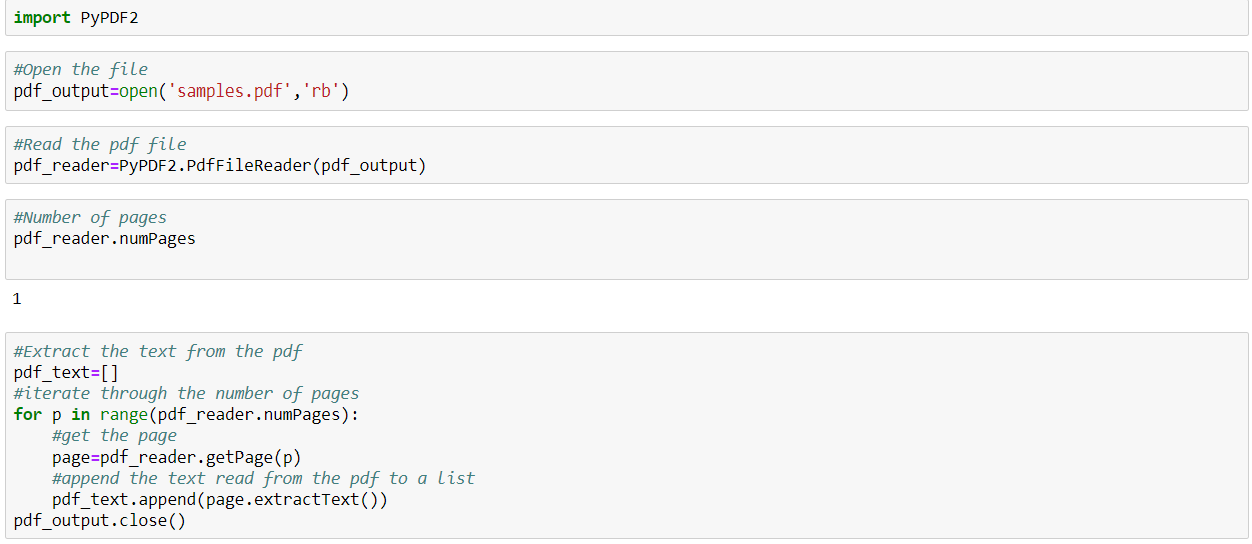

# PDF text extractor in Python

Following the theme of my last post, I'm going to use another PDF focused on Indonesia's current energy situation: the Indonesia Energy Outlook 2019 Report published by the Secretariat General of the National Energy Council. If you open the PDF, you will find a long report with many pages and figures. For the purpose of this post, I am only going to focus on extracting the text from the Executive Summary on pages xii and xiii. With the PDF and text identified, let's move on to using Python to extract the Executive Summary.

# PDF text extractor in Python: install

The library we will use to extract the PDF text is called PyPDF2. PyPDF2 can do much more than just extract text; if you are curious about its other capabilities, you can read about them in its documentation.

Note: The install step below is written for the Google Colab environment. PyPDF2 isn't pre-installed in Colab, so we have to install it before importing it into our code:

```
!pip install PyPDF2
import PyPDF2
```

Before we move to the next step, make sure you have uploaded the PDF document into the file panel on the left of the Colab environment. We must load the PDF as an object before we can start using PyPDF2 on it.

# PDF text extractor in Python: download

If you are working locally instead, run `pip install PyPDF2 textract nltk`. This will download the libraries you require to parse PDF documents and extract keywords. The OCR fallback in Step 2 also needs the Tesseract program installed on your system.

Make sure your PDF file is stored in the same folder as your script (or in the Colab file panel), then start up your favorite editor and type the code below. Note: All lines starting with # are comments.

# PDF text extractor in Python: code

Step 1: Import all libraries

```python
import nltk
import PyPDF2
import textract
from nltk.corpus import stopwords
from nltk.tokenize import word_tokenize

# Download the tokenizer models and the stop-word list once.
# Newer NLTK releases use "punkt_tab", older ones "punkt"; an unknown name just prints an error.
nltk.download("punkt")
nltk.download("punkt_tab")
nltk.download("stopwords")
```

Step 2: Read PDF file

The code uses PyPDF2's `PdfReader` interface; the older `PdfFileReader`, `getPage()` and `extractText()` names were removed in PyPDF2 3.0.

```python
# Write a for-loop to open many files (leave a comment if you'd like to learn how).
filename = 'enter the name of the file here'

# open() reads the file in binary mode; pdfReader is a readable object that will be parsed.
with open(filename, 'rb') as pdfFileObj:
    pdfReader = PyPDF2.PdfReader(pdfFileObj)
    # Discerning the number of pages will allow us to parse through all the pages.
    num_pages = len(pdfReader.pages)
    count = 0
    text = ""
    # The while loop reads each page and appends its text.
    while count < num_pages:
        pageObj = pdfReader.pages[count]
        count += 1
        text += pageObj.extract_text() or ""

# This check exists because PyPDF2 cannot read scanned (image-based) files:
# if it returned no words, we run the OCR library textract instead.
if not text.strip():
    # textract returns bytes, so decode them into a string.
    text = textract.process(filename, method='tesseract', language='eng').decode('utf-8')

# Now we have a text variable that contains all the text derived from our PDF file.
# Type print(text) to see what it contains. It likely contains a lot of spaces and
# possibly junk such as '\n'.
```

Step 3: Convert text into keywords

```python
# Now, we will clean our text variable and return it as a list of keywords.
# The word_tokenize() function breaks our text phrases into individual words.
tokens = word_tokenize(text)

# A list that contains the punctuation we wish to clean.
punctuations = ['(', ')', ';', ':', '[', ']', ',']

# stop_words holds words like "the", "i", "and", etc. that carry little value as keywords.
stop_words = set(stopwords.words('english'))

# A list comprehension that only keeps words that are NOT IN stop_words and NOT IN punctuations.
# NLTK's stop words are lowercase, so we compare the lowercased token.
keywords = [word for word in tokens
            if word.lower() not in stop_words and word not in punctuations]
```

Now you have keywords for your file stored as a list. Store it in a spreadsheet if you want to make the PDF searchable, or parse a lot of files and conduct a cluster analysis. You can also use it to create a recommender system that matches resumes to jobs.
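The Step 2 comment mentions writing a for-loop to open many files. Here is a minimal sketch of that loop, assuming all PDFs sit in one folder. `extract_folder` and `pypdf2_text` are hypothetical helper names, and the extractor is passed in as a function so the loop itself does not depend on any PDF library.

```python
from pathlib import Path
from typing import Callable, Dict


def extract_folder(folder: str, extract: Callable[[Path], str]) -> Dict[str, str]:
    """Run `extract` on every .pdf file in `folder` (hypothetical helper).

    Returns a dict mapping each file name to its extracted text.
    The suffix check is case-insensitive, so 'REPORT.PDF' is included too.
    """
    results: Dict[str, str] = {}
    for path in sorted(Path(folder).iterdir()):
        if path.is_file() and path.suffix.lower() == ".pdf":
            results[path.name] = extract(path)
    return results


def pypdf2_text(path: Path) -> str:
    """Example extractor using PyPDF2's PdfReader (hypothetical helper).

    PyPDF2 is imported inside the function so the loop above works
    without it, e.g. with a different extractor.
    """
    import PyPDF2

    reader = PyPDF2.PdfReader(str(path))
    return "".join(page.extract_text() or "" for page in reader.pages)
```

Usage: `texts = extract_folder(".", pypdf2_text)` gives one text string per PDF in the current folder, ready for the keyword step.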
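The article suggests storing the keyword list in a spreadsheet. One simple way, sketched here under the assumption that a CSV file is enough, is to count each keyword with `collections.Counter` and write the most frequent ones to a file any spreadsheet program can open. `save_keyword_counts` and its `top_n` parameter are hypothetical names chosen for illustration.

```python
import csv
from collections import Counter
from typing import List, Tuple


def save_keyword_counts(keywords: List[str], csv_path: str,
                        top_n: int = 50) -> List[Tuple[str, int]]:
    """Count keywords case-insensitively and write the top_n to a CSV file.

    Returns the (keyword, count) pairs that were written, most frequent first.
    """
    counts = Counter(word.lower() for word in keywords)
    top = counts.most_common(top_n)
    with open(csv_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["keyword", "count"])  # header row
        writer.writerows(top)
    return top
```

Calling `save_keyword_counts(keywords, "keywords.csv")` with the keyword list produced at the end of Step 3 writes one row per keyword; lowercasing first means "Energy" and "energy" are counted together.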
